Which GPU(s) to Get for Deep Learning: My Experience and Advice for Using GPUs in Deep Learning | Analytics & IIoT

![[D] Which GPU(s) to Get for Deep Learning: My Experience and Advice for Using GPUs in Deep Learning : r/MachineLearning](https://external-preview.redd.it/ESD1BhcbiOwKLuPetUl_hjdOaknbwN1A6tjkxBMHCXY.jpg?width=640&crop=smart&auto=webp&s=89f3e68b690f7a4642b8cd71b924503e5011310d)

[D] Which GPU(s) to Get for Deep Learning: My Experience and Advice for Using GPUs in Deep Learning : r/MachineLearning

Monitor and Improve GPU Usage for Training Deep Learning Models | by Lukas Biewald | Towards Data Science

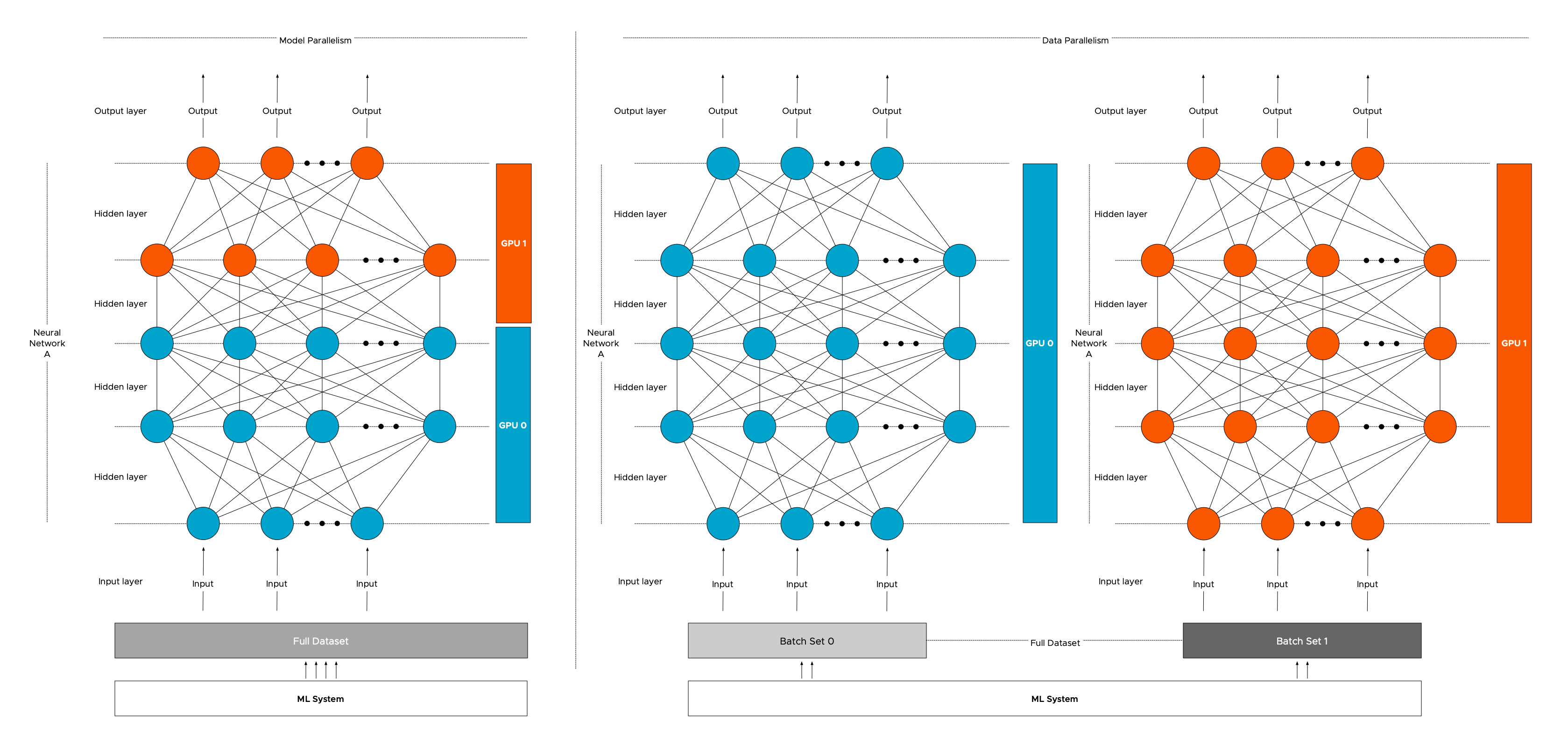

Sharing GPU for Machine Learning/Deep Learning on VMware vSphere with NVIDIA GRID: Why is it needed? And How to share GPU? - VROOM! Performance Blog

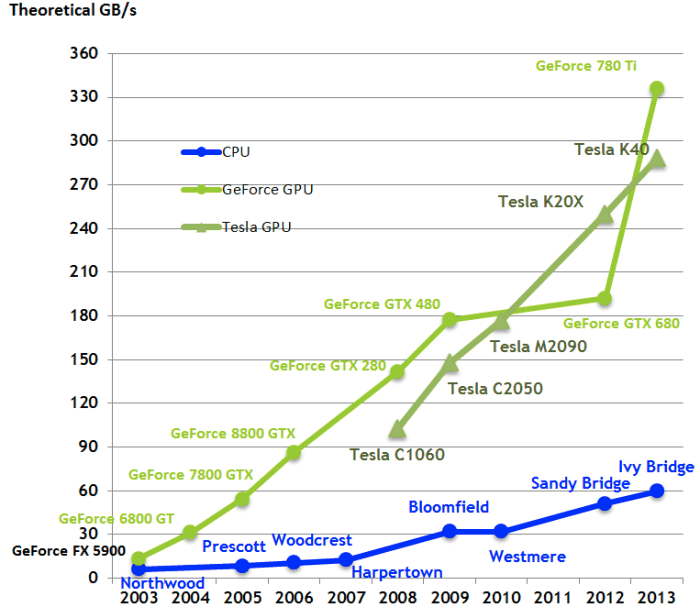

PNY Pro Tip #01: Benchmark for Deep Learning using NVIDIA GPU Cloud and Tensorflow (Part 1) - PNY NEWS

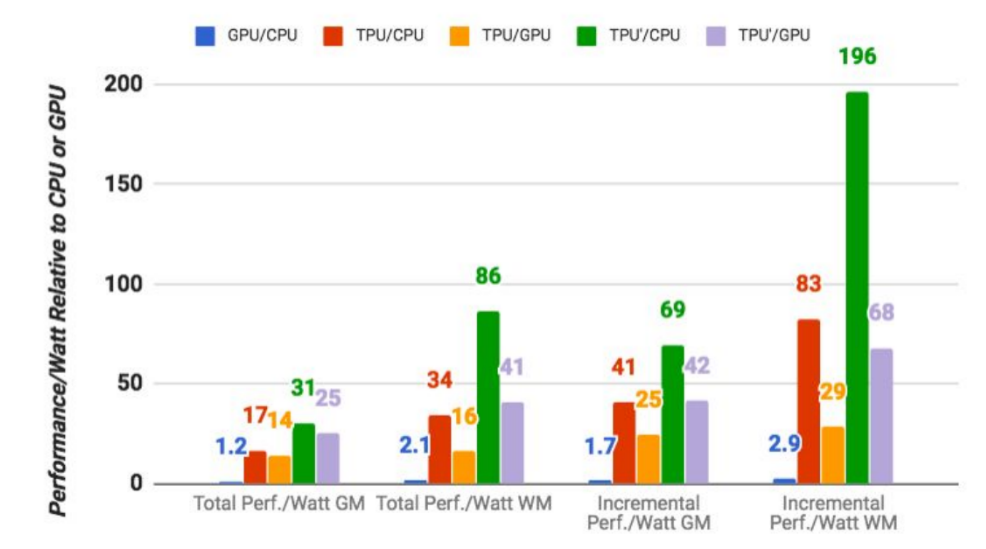

Google says its custom machine learning chips are often 15-30x faster than GPUs and CPUs | TechCrunch